AI economics: As artificial intelligence workloads grow more demanding, large organisations are taking a closer look at how they run them in the cloud.

Uber’s expanded use of AWS-designed chips highlights a broader shift towards infrastructure choices that prioritise efficiency, cost control and scale.

Large enterprises are rethinking the way they run artificial intelligence in the cloud, as pressure grows to deliver high performance without allowing infrastructure costs to spiral. Uber is one of the latest companies to signal that change, expanding its use of custom chips designed by Amazon Web Services to support AI systems across its ride-hailing and delivery operations.

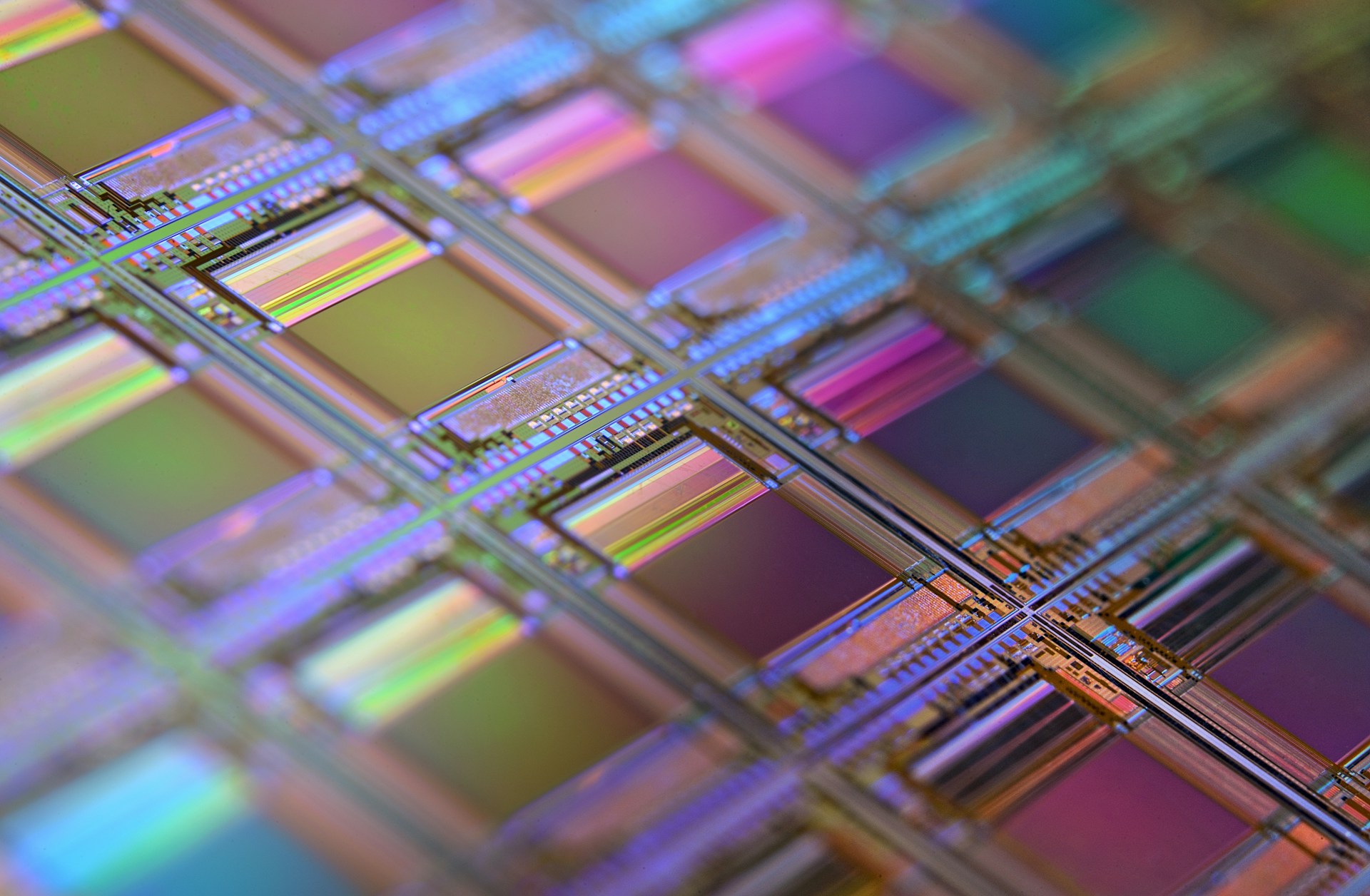

At the centre of this strategy are AWS processors such as Graviton and Trainium. Uber is reportedly increasing its use of these chips to power AI models and the backend systems behind functions including driver and rider matching, journey time prediction, pricing and food delivery route management. These are all data-intensive tasks that require constant processing and regular updates, which makes them expensive to run at scale.

The growing appeal of custom silicon lies in its ability to improve price-performance. AWS positions Graviton, its ARM-based processor, as a more efficient alternative to traditional x86-based instances, while Trainium is designed specifically to reduce the cost of training machine learning models. For a business operating across dozens of countries and handling millions of transactions every day, even modest improvements in efficiency can translate into significant savings.
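A back-of-the-envelope calculation shows how this works. The sketch below is purely illustrative: the annual spend and the 20% price-performance gain are hypothetical figures, not Uber's or AWS's. It also highlights a subtlety: if new hardware delivers 20% more work per dollar, the bill for the same fixed workload falls by roughly 16.7%, not 20%.

```python
def annual_savings(annual_spend: float, price_perf_gain: float) -> float:
    """Savings from running an unchanged workload on hardware that delivers
    (1 + price_perf_gain) times as much work per dollar.

    Both inputs are hypothetical, illustrative parameters.
    """
    # The same amount of work now costs 1 / (1 + gain) of the old spend.
    new_spend = annual_spend / (1 + price_perf_gain)
    return annual_spend - new_spend


# Hypothetical: $50M a year of compute, 20% better price-performance.
print(f"${annual_savings(50_000_000, 0.20):,.0f}")  # prints $8,333,333
```

At this hypothetical scale, a single-digit-percentage efficiency gain would still be worth millions of dollars a year, which is why enterprises weigh chip choices so carefully.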

Uber’s use of AWS chips reflects a more mature phase in enterprise cloud adoption. Rather than simply moving workloads into the cloud, organisations are becoming more deliberate about how those workloads are structured, which processors they run on, and how models are tuned for performance and cost. Uber is reportedly applying AWS chips to both training and inference, the two key stages in the AI lifecycle. Training is the stage in which models learn patterns from data, while inference is the stage in which they apply what they have learned to make decisions in live environments. Both carry a cost, but inference can be particularly expensive because it often runs continuously in production.

This matters because AI is no longer confined to experimentation or isolated use cases. Across sectors such as finance, retail and logistics, machine learning is being embedded into everyday operations for fraud detection, demand forecasting and customer service. As adoption broadens, the economics of AI infrastructure are becoming impossible to ignore. The challenge for IT leaders is not only how to scale AI, but how to do so without eroding the financial benefits these systems are meant to deliver.

Custom chips offer one route through that challenge, but they also introduce new complexity. ARM-based processors such as Graviton can require software changes, because code and dependencies built for x86 must be recompiled or revalidated for a different architecture, while specialised AI hardware such as Trainium, which relies on AWS’s own Neuron software tooling, may demand closer collaboration between engineering teams and the cloud provider. Optimising workloads for specific silicon can produce better results, but it can also increase dependency on a single platform. For enterprises that value portability across multiple clouds, that trade-off may become a strategic concern.

That tension between efficiency and flexibility is becoming a defining issue in digital transformation. On one hand, cloud providers are investing heavily in their own processors to deliver better control over pricing and performance. On the other, enterprises risk becoming more tightly coupled to those ecosystems if they build their AI strategies around proprietary hardware. Uber’s move illustrates how real these trade-offs have become. The company is not stepping back from cloud, but leaning further into specialised cloud infrastructure to support critical AI-driven services.

What makes this shift notable is that it signals a change in mindset, not just in tooling. Infrastructure is no longer a background consideration in AI strategy. It is becoming a competitive lever. For CIOs, CTOs and transformation leaders, the question is not simply whether to adopt AI, but how to run it efficiently enough to sustain long-term value. In that context, custom silicon is moving from a niche option to a mainstream part of the cloud conversation.

Uber’s adoption of AWS chips may be only one example, but it captures a broader enterprise reality. As AI workloads expand, businesses are under pressure to optimise every layer of the stack. General-purpose compute will remain important, but it is increasingly being complemented by hardware designed for specific workloads. The future of cloud-based AI is likely to be shaped not just by smarter models, but by smarter infrastructure choices.